You've followed all the "best practices." Your React app uses code splitting, memoization, and sophisticated Server-Side Rendering (SSR). Yet, despite impressive Lighthouse scores in development, your Real User Monitoring (RUM) data tells a different story: users on mid-range devices still hit frustrating delays and janky interactions, and bounce rates stay high.

Why does performance remain elusive? Because the bottleneck has shifted. It's no longer just the network; it's the Parallel Paradox. Your users are holding multi-core supercomputers, but your code is trapped in a single-threaded lane.

It’s time to move past "Hardware Blindness" and embrace the Silicon Truth.

For years, web performance optimization focused heavily on the network. We optimized images, minified assets, implemented CDNs, and embraced HTTP/2. These efforts paid off, drastically reducing initial load times. However, as applications grew more complex, especially with frameworks like React, the bottleneck moved.

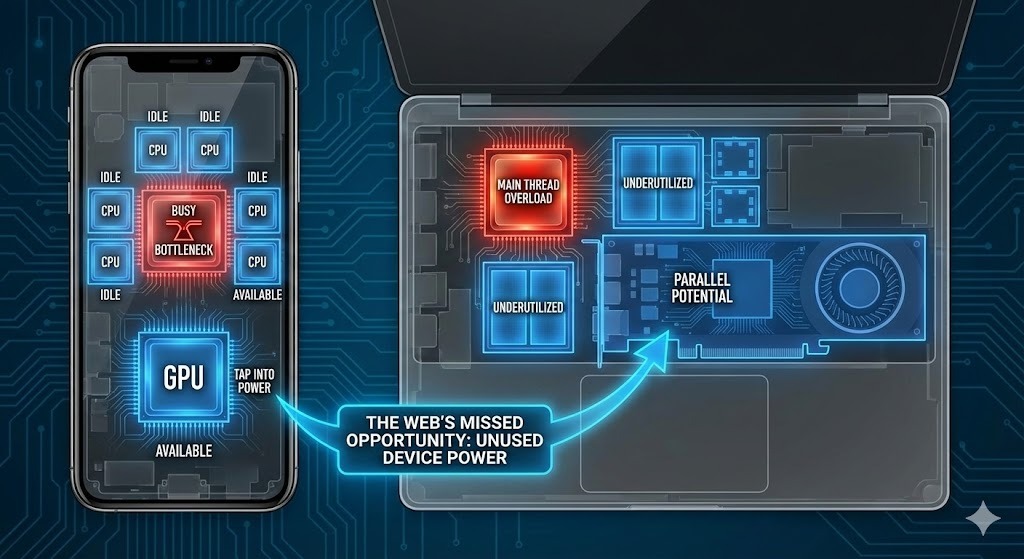

Today, the primary struggle is the CPU Bottleneck on the client's device. JavaScript execution, React hydration, complex state updates, and intricate UI logic all demand processing power, and they all run on the browser's single main thread. When this thread is busy, the UI freezes, user interactions are delayed, and the perception of speed evaporates.

The Bottleneck Shift. Performance issues have moved from the network to the client. Modern apps are often slowed less by connection speed than by the single-threaded CPU bottleneck.

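This isn't just an annoyance; it directly degrades the responsiveness metrics that reflect main-thread work, such as Interaction to Next Paint (INP) and Total Blocking Time (TBT).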

These issues are dramatically amplified on devices with less powerful processors, which make up the vast majority of the global mobile market. Your flagship users might be fine, but everyone else is struggling.
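To see why slower chips hurt so disproportionately, it helps to sketch how Total Blocking Time (TBT), a common lab metric, is calculated: every main-thread task longer than 50 ms contributes only its excess over 50 ms. The task durations below are made-up numbers, and the 3x slowdown for the mid-range device is an assumption for illustration, not measured data.

```typescript
// Threshold above which a main-thread task counts as "long" (50 ms).
const LONG_TASK_THRESHOLD_MS = 50;

/**
 * Sums the blocking portion of each task: the time beyond 50 ms.
 * `taskDurationsMs` is assumed to hold main-thread task durations
 * collected between First Contentful Paint and Time to Interactive.
 */
function totalBlockingTime(taskDurationsMs: number[]): number {
  return taskDurationsMs.reduce(
    (sum, duration) => sum + Math.max(0, duration - LONG_TASK_THRESHOLD_MS),
    0,
  );
}

// The same (hypothetical) hydration work, measured on two devices.
const flagshipTasksMs = [30, 45, 60];
const midRangeTasksMs = [90, 135, 180]; // assumed 3x slower CPU

console.log(totalBlockingTime(flagshipTasksMs)); // 10
console.log(totalBlockingTime(midRangeTasksMs)); // 255
```

In this hypothetical, a CPU that is 3x slower produces roughly 25x more blocking time, because tasks that used to finish under the 50 ms threshold now cross it.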

The irony of the CPU bottleneck is that most modern devices are packed with untapped power.

Wasted Potential. While your app struggles on a blocked main thread, vast computing resources, including multi-core CPUs and the GPU, sit idle on the user's device.

Modern smartphones, tablets, and laptops come equipped with multi-core CPUs and capable GPUs.

Yet, your single-threaded JavaScript application is only using a tiny fraction of this available horsepower. It's like having an eight-lane highway but only driving in one lane while the others sit empty. This inefficiency is a major missed opportunity for truly instant experiences.

This is where Client-Side Compute steps in. It's the strategy of moving heavy work that doesn't need direct DOM access, such as large data transformations, heavy numerical computations, animation calculations, or even parts of your React rendering process, off the single main thread and onto these underutilized CPU cores and GPUs. The goal is to free up the main thread to focus purely on responsiveness, ensuring a buttery-smooth UI.
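The browser's standard tool for this is the Web Worker. Here is a minimal sketch, assuming a DOM-free computation; `sumOfSquares` and `sumOfSquaresOffThread` are hypothetical names, and building the worker from an inline script is a shortcut to keep the example in one file.

```typescript
// CPU-heavy, DOM-free work: safe to run on any thread.
function sumOfSquares(values: number[]): number {
  let total = 0;
  for (const value of values) total += value * value;
  return total;
}

// Runs `sumOfSquares` in a Web Worker when available, inline otherwise.
function sumOfSquaresOffThread(values: number[]): Promise<number> {
  if (typeof Worker === "undefined") {
    return Promise.resolve(sumOfSquares(values)); // fallback, e.g. during SSR
  }
  // Inline worker script so the sketch fits in one file; a real app
  // would usually keep the worker in its own module.
  const script = `
    const compute = ${sumOfSquares.toString()};
    self.onmessage = (event) => self.postMessage(compute(event.data));
  `;
  const url = URL.createObjectURL(new Blob([script], { type: "text/javascript" }));
  const worker = new Worker(url);

  return new Promise<number>((resolve, reject) => {
    const cleanUp = () => {
      worker.terminate();
      URL.revokeObjectURL(url);
    };
    worker.onmessage = (event: MessageEvent<number>) => {
      cleanUp();
      resolve(event.data);
    };
    worker.onerror = (event) => {
      cleanUp();
      reject(new Error(event.message));
    };
    worker.postMessage(values); // the array is copied via structured clone
  });
}
```

A component would call `await sumOfSquaresOffThread(data)` from an effect or event handler while the main thread stays free to paint. For large inputs, the copy made by `postMessage` can itself become expensive; passing a typed array's `ArrayBuffer` as a transferable avoids it.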

You cannot solve a bottleneck you haven't identified. Before applying "Client-Side Compute," you need the Silicon Matrix Report.

The Matrix is a 15-day hardware-aware audit of your actual traffic. It doesn't just tell you a page is slow; it tells you why based on the metal.
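What "hardware-aware" could mean in practice: segment your RUM samples by device class before computing percentiles, so a slow tier is not averaged away by fast ones. The sketch below is an illustration only, not how the Silicon Matrix Report works; the field names, the tier boundaries, and the sample data are all assumptions.

```typescript
// One RUM sample. The field names are hypothetical; `cores` could come
// from `navigator.hardwareConcurrency` on the user's device.
interface RumSample {
  cores: number;
  interactionLatencyMs: number;
}

type Tier = "low" | "mid" | "high";

// Illustrative boundaries only. Core count alone is a crude proxy for
// real CPU performance.
function tierFor(cores: number): Tier {
  if (cores <= 4) return "low";
  if (cores <= 8) return "mid";
  return "high";
}

// Nearest-rank percentile of a non-empty list, with p in (0, 100].
function percentile(values: number[], p: number): number {
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length);
  return sorted[Math.max(0, rank - 1)];
}

// Reports the 75th-percentile latency per tier (null when a tier is empty).
function p75ByTier(samples: RumSample[]): Record<Tier, number | null> {
  const latencies: Record<Tier, number[]> = { low: [], mid: [], high: [] };
  for (const sample of samples) {
    latencies[tierFor(sample.cores)].push(sample.interactionLatencyMs);
  }
  const p75 = (values: number[]) => (values.length > 0 ? percentile(values, 75) : null);
  return { low: p75(latencies.low), mid: p75(latencies.mid), high: p75(latencies.high) };
}

// Made-up samples: four from 4-core devices, four from 12-core devices.
const samples: RumSample[] = [
  { cores: 4, interactionLatencyMs: 120 },
  { cores: 4, interactionLatencyMs: 480 },
  { cores: 4, interactionLatencyMs: 300 },
  { cores: 4, interactionLatencyMs: 650 },
  { cores: 12, interactionLatencyMs: 40 },
  { cores: 12, interactionLatencyMs: 60 },
  { cores: 12, interactionLatencyMs: 55 },
  { cores: 12, interactionLatencyMs: 80 },
];

console.log(p75ByTier(samples)); // { low: 480, mid: null, high: 60 }
```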

Once the Matrix identifies your "Friction Zones," the ReactBooster Engine orchestrates a total architectural shift: Adaptive Execution.
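As a rough picture of what adapting execution to the device can look like, the sketch below picks between running a task inline and fanning it out to workers. This is not the ReactBooster Engine's API; `planExecution`, the 16 ms budget, and the worker caps are all hypothetical choices made for illustration.

```typescript
// Snapshot of what the device reports. `deviceMemoryGb` mirrors
// `navigator.deviceMemory`, which only some browsers expose.
interface DeviceProfile {
  cores: number;
  deviceMemoryGb?: number;
}

type ExecutionPlan =
  | { mode: "main-thread" }
  | { mode: "workers"; workerCount: number };

// Hypothetical budget: work estimated at or under this stays on the main
// thread, since starting a worker and copying data has its own overhead.
const INLINE_BUDGET_MS = 16;

function planExecution(device: DeviceProfile, estimatedCostMs: number): ExecutionPlan {
  if (estimatedCostMs <= INLINE_BUDGET_MS) {
    return { mode: "main-thread" };
  }
  // Each worker has its own heap, so low-memory devices get one worker
  // (assumed caps: 1 at 2 GB or less, otherwise 4).
  const memoryCap = (device.deviceMemoryGb ?? 4) <= 2 ? 1 : 4;
  // Leave one core for the main thread, but always use at least one worker.
  const workerCount = Math.min(memoryCap, Math.max(1, device.cores - 1));
  return { mode: "workers", workerCount };
}

// Reads the profile in a browser, with a conservative default elsewhere.
function currentDevice(): DeviceProfile {
  if (typeof navigator === "undefined") return { cores: 1 };
  const nav = navigator as Navigator & { deviceMemory?: number };
  return { cores: nav.hardwareConcurrency || 1, deviceMemoryGb: nav.deviceMemory };
}

console.log(planExecution({ cores: 8, deviceMemoryGb: 8 }, 200)); // { mode: 'workers', workerCount: 4 }
console.log(planExecution({ cores: 8, deviceMemoryGb: 2 }, 200)); // { mode: 'workers', workerCount: 1 }
console.log(planExecution({ cores: 8, deviceMemoryGb: 8 }, 5));   // { mode: 'main-thread' }
```

The point of the design is that the same task can take different paths on different hardware, decided at runtime via `planExecution(currentDevice(), estimate)` rather than fixed at build time.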

Embracing client-side compute through dynamic orchestration isn't just a technical achievement; it's a strategic business advantage.

The performance bottleneck has undeniably moved from the network to the client-side CPU. Trying to solve 21st-century performance challenges with 20th-century single-threaded approaches is a losing battle.

The future of web performance isn't about simply making code smaller; it's about making execution smarter. By harnessing the full power of modern devices through intelligent client-side compute and dynamic orchestration, React applications can finally deliver on the promise of instant, flawless experiences for every single user, everywhere.

Ready to unlock your app's full potential and lead the next wave of web performance? Discover how ReactBooster can revolutionize your web app's speed.

Uncover the untapped hardware headroom on your users' devices. Our Silicon Matrix Report identifies exactly where your architecture redlines and calculates the projected ARR you can reclaim by eliminating main-thread jank.